Run large language models locally and privately

AI on Device - Local Assistant

What is it about?

Run large language models locally and privately

App Store Description


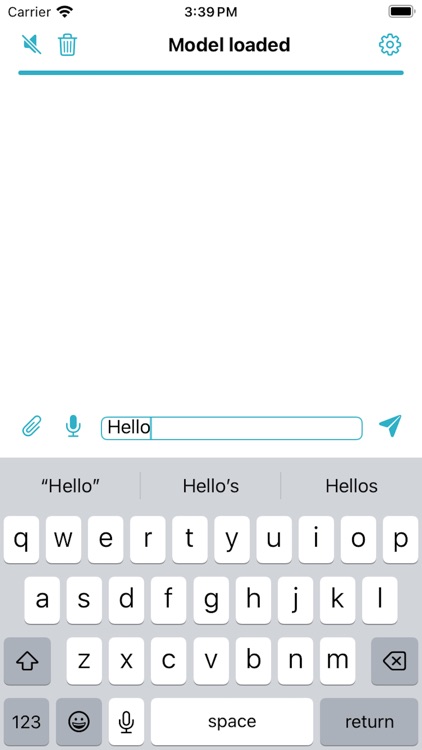

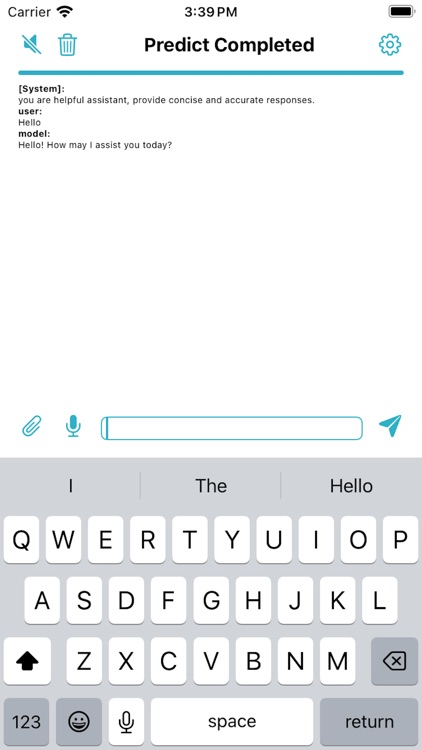

LocalAI: Experience the power of large language models (LLMs) directly on your device! LocalAI puts your privacy first and lets you explore the capabilities of various language models without an internet connection.

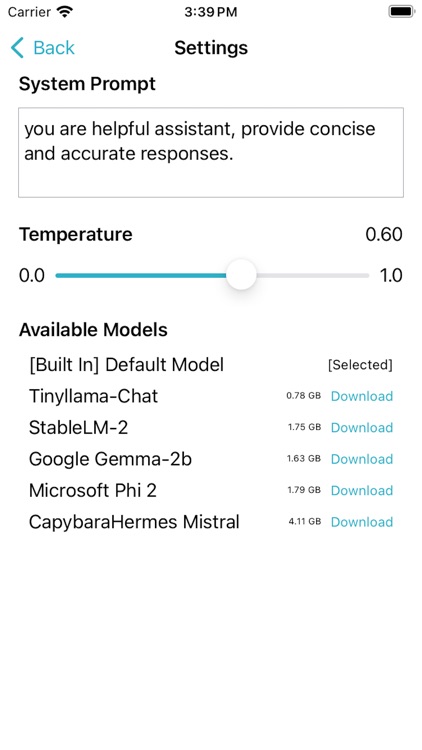

- Run Locally: Use popular models such as Google Gemma, Mistral, TinyLlama, and StableLM right on your iPhone, iPad, Mac, or Apple Vision Pro.

- Customization at Your Fingertips: Tailor settings for system prompts and model temperature to suit your specific needs and preferences.

- Future-Ready: Stay tuned for exciting upcoming features that will enhance your experience and expand the app’s capabilities.

- Designed for Everyone: Whether you're a developer, researcher, or just a curious mind, LocalAI offers a seamless and flexible environment to explore and interact with cutting-edge language models locally.

Privacy and Independence:

LocalAI ensures complete data privacy and independence from the internet, making it ideal for sensitive or offline scenarios.
